Network Incident Response in the AI Era

Incident reported. Point-in-time traffic snapshot created. PCAP exported. Anomalies detected. Rogue pods identified. TLS payloads decrypted. Forensic report generated. Evidence uploaded to cloud storage for compliance. Seven prompts. One AI agent. Under five minutes.

"We Have Reports of a Possible Breach Yesterday at 5:23 PM"

The security team flags it the next morning. Anomalous outbound connections. Pods communicating with external IPs that don't match any known service. Timestamp: yesterday, 5:23 PM.

By the time you hear about it, it's been 16 hours. The pods may have restarted. Nodes may have scaled. Logs may have rotated. In a traditional setup, the evidence is already gone.

But your Kubernetes cluster has been running Kubeshark — silently recording every packet for your business-critical workloads, 24/7. The raw data from yesterday at 5:23 PM is still sitting in the buffer. Waiting.

You open a chat with your AI agent of choice: Claude Code, Cursor, or any other MCP-compatible AI tool.

Prompt 1

You: "Create a snapshot from yesterday 5 PM to 6 PM. Name it."

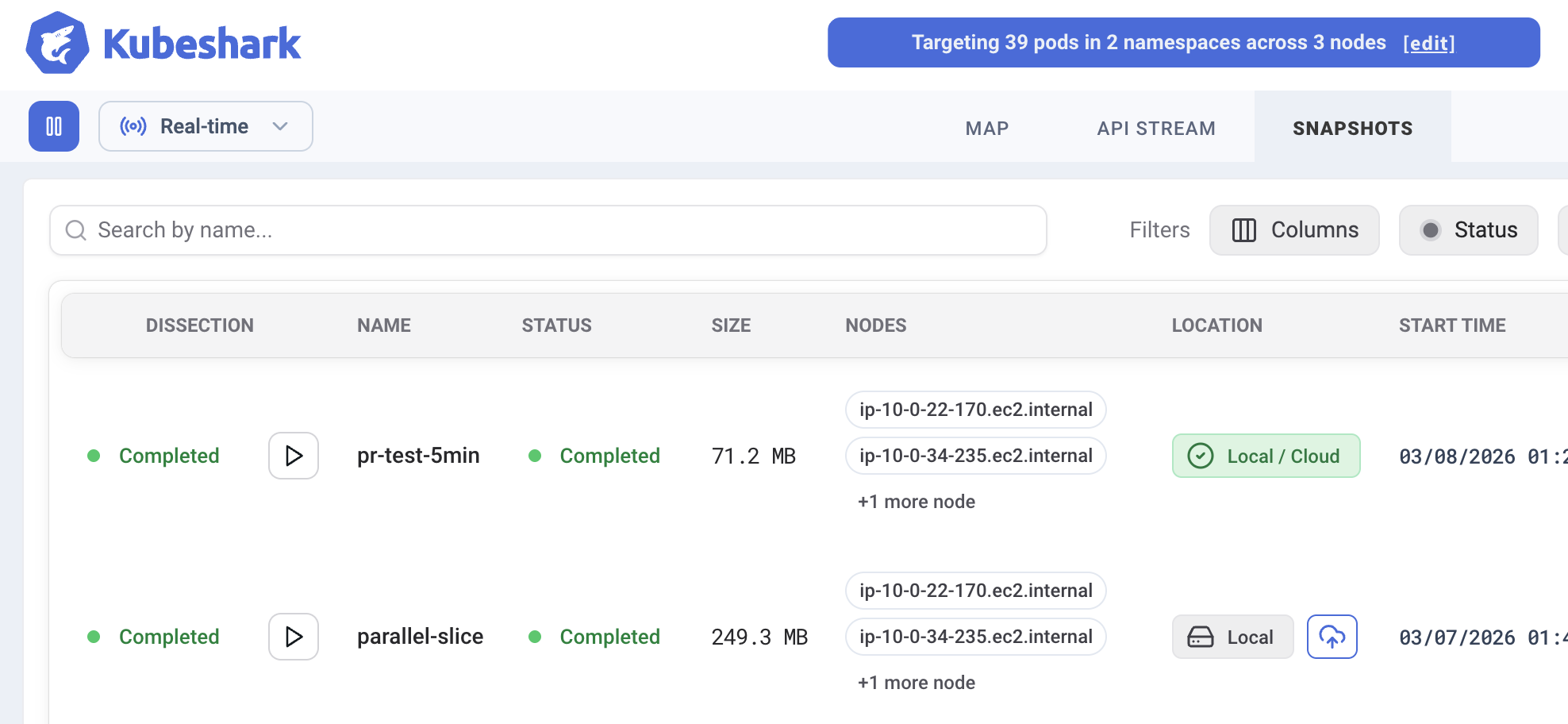

Agent: 2.8 GB of raw packet data across 3 nodes — frozen in eight seconds. Every TCP and UDP packet for the targeted workloads during that hour, locked into an immutable snapshot. Buffer rotation, pod restarts, node scaling — none of it can touch this data now.

Prompt 2

You: "Download the PCAP."

Agent: 2.6 GB. 74 PCAP files from 3 nodes, merged into one. A single Wireshark-compatible file containing the complete network evidence from the one-hour snapshot window.

Prompt 3

You: "Scan the PCAP. What's the busiest IP? Anything unusual?"

Agent: 5,301,288 packets. 2.6 GB. 9 L7 protocols detected. The anomalies surface immediately:

The Google IPs jump out. They weren't in any previous capture. Something new is calling home from inside the cluster.

Under the hood, the agent ran a full-spectrum tshark analysis — IP endpoints, protocol hierarchy, HTTP status codes, DNS statistics, TCP anomalies, external connections — all in parallel.
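The parallel tshark pass described above could look roughly like the following. This is a minimal sketch, not the agent's actual implementation: the report names, the `build_command` and `run_reports` helpers, and the choice of a thread pool are assumptions made for illustration. The `-r`, `-q`, and `-z` options and the listed statistics names are standard tshark features.

```python
import subprocess
from concurrent.futures import ThreadPoolExecutor

# Each entry maps a report name (our own label) to a tshark "-z" statistics option.
TSHARK_STATS = {
    "ip_endpoints": "endpoints,ip",
    "protocol_hierarchy": "io,phs",
    "http_status": "http,tree",
    "dns": "dns,tree",
    "tcp_conversations": "conv,tcp",
}


def build_command(pcap_path: str, stat: str) -> list[str]:
    # -r reads the capture file, -q suppresses per-packet output,
    # -z selects the statistics report to print at the end.
    return ["tshark", "-r", pcap_path, "-q", "-z", stat]


def run_reports(pcap_path: str) -> dict[str, str]:
    # Launch one tshark process per report and collect stdout by report name.
    # Requires tshark to be installed and on PATH.
    def run(item):
        name, stat = item
        result = subprocess.run(
            build_command(pcap_path, stat),
            capture_output=True, text=True, check=True,
        )
        return name, result.stdout

    with ThreadPoolExecutor(max_workers=len(TSHARK_STATS)) as pool:
        return dict(pool.map(run, TSHARK_STATS.items()))
```

Calling `run_reports("snapshot.pcap")` (a hypothetical file name) would return the text of each report keyed by name, which an agent could then summarize.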

Prompt 4

You: "Get the L7 entries for these IPs."

Agent: The decrypted HTTPS payload — the actual content of an encrypted TLS session:

There it is.

A pod called mizutest-websocket-client, which is not part of the application, is running curl inside a container and sending JSON data to www.google.com over TLS. Google rejected the request, but the same technique pointed at a real C2 server would succeed silently.

The PCAP told us that traffic happened. Kubeshark's L7 dissection — powered by eBPF TLS interception — tells us what was said. The encrypted session was intercepted at the kernel level, no private keys needed, full request body captured.

The agent keeps pulling. Two more rogue pods emerge:

All three pods: deployed in sock-shop namespace. All labeled version: v0.1.0. All using the mizutest- prefix. None are part of the Sock Shop application.

Same actor. Coordinated deployment. Hiding among legitimate workloads.
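The correlation step, spotting that unknown pods share a naming pattern, can be sketched in a few lines. This is an illustrative sketch only: the pod list, the `KNOWN_WORKLOADS` set, and the second `mizutest-` pod name are hypothetical, and the real investigation also compared namespaces and labels.

```python
from collections import defaultdict


def group_by_prefix(pods, known_workloads):
    """Group pods that are not known workloads by the text before the first hyphen."""
    groups = defaultdict(list)
    for pod in pods:
        if pod["name"] in known_workloads:
            continue  # part of the expected application, ignore
        prefix = pod["name"].split("-", 1)[0] + "-"
        groups[prefix].append(pod["name"])
    return dict(groups)


# Hypothetical inventory; only mizutest-websocket-client comes from the investigation.
PODS = [
    {"name": "front-end", "namespace": "sock-shop"},
    {"name": "carts", "namespace": "sock-shop"},
    {"name": "mizutest-websocket-client", "namespace": "sock-shop"},
    {"name": "mizutest-example-a", "namespace": "sock-shop"},
]
KNOWN_WORKLOADS = {"front-end", "carts"}

SUSPECTS = group_by_prefix(PODS, KNOWN_WORKLOADS)
```

A shared prefix across several unknown pods is a weak signal on its own, but combined with matching labels and a common namespace it supports the conclusion that they were deployed together.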

Prompt 5

You: "Create a PCAP with only the malicious traffic. Use BPF."

Agent: 2.6 GB → 85 MB. 97% noise eliminated. Only the attacker's packets come through. The agent constructed a BPF filter targeting all six malicious IPs, and Kubeshark applied it server-side during export — no need to download the full capture and filter locally. 85 MB of pure evidence, ready for Wireshark, your security team, or legal.
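A BPF filter that isolates a set of IPs is a chain of `host` primitives joined with `or`. The sketch below shows one way to build such an expression. The `build_bpf_filter` helper is hypothetical, and the addresses are placeholders from the reserved documentation ranges, not the six IPs from the investigation.

```python
import ipaddress


def build_bpf_filter(ips):
    """Return a BPF expression matching traffic to or from any of the given IPs."""
    # Validate each address so a typo cannot silently produce a malformed filter.
    hosts = [str(ipaddress.ip_address(ip)) for ip in ips]
    if not hosts:
        raise ValueError("at least one IP is required")
    return " or ".join(f"host {h}" for h in hosts)


# Placeholder addresses (RFC 5737 documentation ranges), for illustration only.
SUSPECT_IPS = ["203.0.113.10", "203.0.113.11", "198.51.100.7"]
BPF_FILTER = build_bpf_filter(SUSPECT_IPS)
print(BPF_FILTER)
```

The same expression also works locally, for example with `tcpdump -r full.pcap -w filtered.pcap '<expression>'`, but applying it server-side avoids moving the full 2.6 GB capture first.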

Prompt 6

You: "Create an HTML report with everything you found."

Agent: Here's the HTML report you requested.

Prompt 7

You: "Upload the snapshot to cloud storage for long-term retention and compliance."

Agent: Snapshot uploaded to S3-compatible cloud storage — immutable, timestamped, compliance-ready. Frameworks like PCI DSS, SOC 2, HIPAA, and NIST 800-53 require organizations to retain network forensic evidence for months or years. The agent checks cloud storage availability, initiates an async upload, and monitors the job to completion.

The snapshot is now preserved in cloud storage — available for future forensic review, audit, or legal proceedings. The evidence chain is complete: from live traffic to permanent archive, without leaving the conversation.
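The "initiate an async upload, then monitor the job" pattern is a plain polling loop. The following is a generic sketch under stated assumptions: the status strings (`"completed"`, `"failed"`) and the `get_status` callable are hypothetical stand-ins, not Kubeshark's actual API.

```python
import time


def wait_for_job(get_status, poll_seconds=5.0, timeout_seconds=600.0, sleep=time.sleep):
    """Poll get_status() until the job completes, fails, or the timeout elapses."""
    waited = 0.0
    while True:
        status = get_status()
        if status == "completed":
            return status
        if status == "failed":
            raise RuntimeError("upload job failed")
        if waited >= timeout_seconds:
            raise TimeoutError("upload job did not finish in time")
        sleep(poll_seconds)
        waited += poll_seconds


# Simulated job that finishes on the third status check.
_statuses = iter(["pending", "running", "completed"])
final_status = wait_for_job(lambda: next(_statuses), sleep=lambda seconds: None)
```

Injecting `sleep` keeps the loop testable without real delays. In practice `get_status` would wrap whatever job-status call the storage integration exposes.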

Seven Prompts. That's It.

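Here's every prompt that drove the investigation:

1. "Create a snapshot from yesterday 5 PM to 6 PM. Name it."
2. "Download the PCAP."
3. "Scan the PCAP. What's the busiest IP? Anything unusual?"
4. "Get the L7 entries for these IPs."
5. "Create a PCAP with only the malicious traffic. Use BPF."
6. "Create an HTML report with everything you found."
7. "Upload the snapshot to cloud storage for long-term retention and compliance."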

No SSH. No kubectl exec. No tcpdump. No manual Wireshark filtering.

Under five minutes from "someone hacked my system" to a forensic report identifying three rogue pods, their processes, their container IDs, their decrypted TLS payloads, and their external destinations.

What Made This Possible

Three things came together to turn a panicked Slack message into a resolved incident in under five minutes:

Continuous Raw Capture

Kubeshark records every packet at the kernel level using eBPF — continuously, for your targeted workloads, with minimal configuration. When the breach happened, the evidence was already in the buffer. The agent simply asked for a snapshot, and the data was preserved before it could rotate out.

L7 Visibility Through TLS

Most network tools stop at "IP A talked to IP B on port 443." Kubeshark goes further — intercepting TLS sessions via eBPF at the kernel level, without needing private keys. The agent didn't just see that a pod connected to Google. It saw the HTTP method, the headers, the JSON body, the User-Agent string, the response code. That's the difference between "suspicious connection detected" and "pod mizutest-websocket-client, process curl, PID 46, container d61c03bbca3c, sent {"key": "value"} to www.google.com via TLS-encrypted HTTPS."

AI + MCP = Natural Language Forensics

The MCP server gives the AI agent direct access to Kubeshark's capabilities — snapshot creation, PCAP export with BPF filters, L7 API queries with KFL filters, L4 flow analysis. The agent combines these server-side tools with local analysis (tshark for PCAP scanning) to build a complete picture. You describe what you want. The agent figures out how to get it.
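At the protocol level, an MCP client invokes a server-side tool by sending a JSON-RPC 2.0 request with the method `tools/call`. The sketch below builds such a request. The request envelope follows the MCP specification, but the tool name `create_snapshot`, its argument keys, and the timestamps are hypothetical and do not reflect Kubeshark's actual tool schema.

```python
import json


def make_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request for MCP's tools/call method."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool name and argument keys, chosen only for illustration.
request = make_tool_call(
    1,
    "create_snapshot",
    {
        "name": "example-snapshot",
        "start": "2025-01-01T17:00:00Z",
        "end": "2025-01-01T18:00:00Z",
    },
)
print(json.dumps(request, indent=2))
```

The agent's job is to translate a sentence like "create a snapshot from 5 PM to 6 PM" into a structured call of this shape, then interpret the tool's response.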

Your Toolkit

Every capability used in this investigation:

Try It

The next time someone says "something's off" — don't reach for kubectl. Start a conversation.

"Create a snapshot for the last hour. Download the PCAP. Scan for anomalies. Show me the L7 payloads for any suspicious IPs. Create a filtered PCAP with just the evidence. Generate a report."

Your AI agent will do the rest.

Kubeshark provides continuous cluster-wide traffic recording, immutable snapshots, and AI-powered forensic analysis via MCP.